Defence Capabilities: UK National Audit Office Review – Why Doesn’t the MOD Get What It Wants?

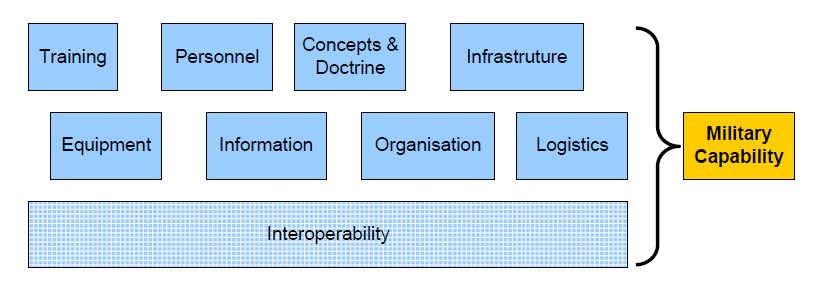

The UK Ministry of Defence rarely deals in equipment or technology on their own anymore; its focus is on capability. A capability is the blend of what the MOD calls Defence Lines of Development (DLODs): training, equipment, personnel, information, concepts and doctrine, organisation, infrastructure, and logistics, plus a bonus overarching ‘DLOD’ called interoperability, which is essentially how they all work together. Whilst this may over-complicate some procurements, because it builds in so many factors, it does mean that planners can understand the readiness of a capability in its entirety. In its latest report, the UK’s National Audit Office (NAO) uses the example of a tank: as a piece of equipment, it is of little use without trained personnel, plus maintenance and logistics support throughout its life. Add appropriate doctrine and tactics, and it becomes an effective fighting capability.

The NAO has recently reviewed what happens to capabilities once they are identified as part of a delivery programme. In doing so, it considered how far they are delayed and what causes those delays, as well as the MOD’s ability to monitor delivery and learn from the issues that arise. The scope of the review includes projects such as F-35 fighter jets and Falcon communications equipment, alongside Watchkeeper UAVs and other capabilities that have reached full operating capability.

The headline findings may not surprise those who follow these programmes individually, but it is concerning that the NAO has found them to be consistent and recurring problems.

On delays to delivery, the NAO found that nearly one-third of the most significant capabilities face major risks to on-time delivery. Suppliers provide equipment that is late, faulty, or both, and this is a recurring problem. The Commands (Army, Air, Navy, Strategic) and their delivery teams lack the capacity and skills needed to deliver the capability, with staffing shortfalls of as much as 20%.

One DLOD in particular, ‘training’, is identified as a weakness in delivery, which is especially concerning because it can hold up a capability even when everything up to that point may have gone well. Finally, the NAO does, of course, raise funding as an issue, both for individual programmes and for the portfolio as a whole.

On managing a programme’s key milestones, the NAO found that the MOD can report a milestone as complete even though the intended capability has not been delivered at that point; this is one of the inherent problems of performance management. Sometimes the milestones are not measurable enough to tell what they were meant to signify in the first place.

All of this undermines monitoring: MOD Head Office cannot oversee the whole process and so cannot hold the individual Commands to account. Even if it could, the Commands’ own portfolio management arrangements are at different levels of maturity, so they cannot be compared against each other, which limits how well they can learn from one another.

To transform (a popular MOD term at the moment) how capabilities are delivered, the MOD has set out a number of programmes. However, the NAO found that these would not actually resolve some of the recurring issues. They are also not coordinated across the different Commands, so they may run at different speeds (as seen with the maturity issues) and may conflict with each other at particular points. Many of the issues are blamed on affordability, that is, on the funding behind the programmes, yet the NAO’s report suggests there are much more fundamental issues with how the MOD manages the funds it has and ensures that they achieve value for money.

As with all of the NAO’s reports, this one sets out a series of recommendations, and they seem quite fundamental to running a good business. Definitely a concerning area! Hopefully it is one the UK’s MOD improves on soon.